Daria Mosolova, BBC Monitoring

Social Media

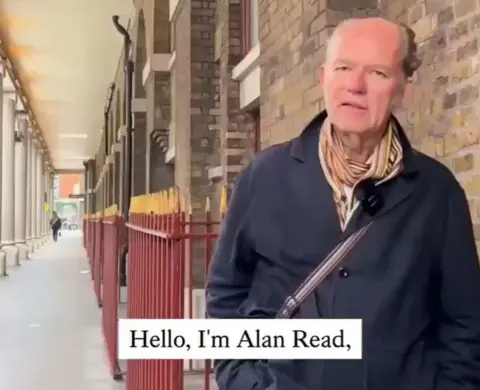

Like any habitual social media user, King's College London professor Alan Read did not pay much heed to the occasional deepfakes that would flash up on his feed. Sometimes he would report them, other times he would simply scroll past.

Until one day, an obscure account tagged him in a video featuring his own face.

In it, a synthetic voice nearly identical to that of Dr Read went on a politicised tirade against French President Emmanuel Macron, berating him and other Western leaders as "aboard the Titanic which has 'European Union' written on its hull".

"Almost everything in that video is egregious, and awful to listen to," Dr Read, a seasoned professor of theatre with no connection to politics, told the BBC. "It strikes me as... utterly alien to me."

The avatar of the unwitting Dr Read appeared in a new wave of Russia-linked synthetic videos that swept across social media over the past months, raising concern among security experts that the West must brace for a battle against the Kremlin's influence on the artificial intelligence front.

"What we're seeing is not just a spike in deepfakes but a shift in how influence is produced," said Chris Kremidas-Courtney, a defence and security analyst at the European Policy Centre think-tank.

"We face systems that can generate... persuasion at scale, for pennies. To me this is a revolution in political influence and none of our current governance schemes are ready to address it."

The videos - some of which garner hundreds of thousands of views - discredit EU institutions and accuse the Kyiv government of corruption just as it vies for funding from Western partners to fight into the fifth year of a full-scale invasion from Russia.

The recent uptick comes several months after OpenAI released Sora2, the latest iteration of its video-generating software, which marked a leap in the realism of its output.

AFP via Getty Images

Competing for market share in the shadow of the tech giant, countless runner-up apps moved to attract customers by slashing prices, or waiving safety measures such as the watermarks that Sora2 brands its videos with to distinguish them from real-life footage.

"They need to draw in users," said Russian AI expert Arman Tuganbaev, adding that while OpenAI is trying to thwart attempts to create videos of specific people, "second-tier apps will give you that option".

OpenAI told the BBC it takes action against accounts that engage in deceptive activity designed to cause harm, including falsely claiming where content comes from.

The tech race has fuelled a steady increase in both the volume and sophistication of foreign influence campaigns, strengthening Russia's hand in its hybrid conflict with the West.

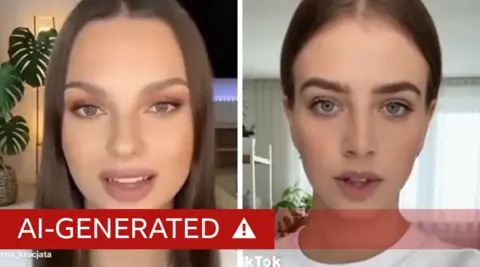

In late December, a slew of AI-generated videos went viral on TikTok, depicting young Polish women calling for "Polexit", or Poland's withdrawal from the EU.

"There is no doubt that this is Russian disinformation," said Adam Szlapka, Poland's government spokesman. "If someone looks closely, they can spot Russian syntax in these videos."

Poland called on the European Commission to investigate TikTok over the incident.

TikTok, which has since removed the clips and the accounts that posted them, said it had taken down more than 75 covert influence operations globally in 2025.

Social media

In the UK, MPs have discussed concerns that Russian deepfakes could influence local elections in May.

"We have seen them used extensively in elections around the world, so there is no reason to assume Britain would be an exception," Vijay Rangarajan, the chief executive of the UK Electoral Commission, told lawmakers.

Britain's Online Safety Act does not explicitly classify disinformation as a harm. It does oblige platforms to remove the material they can prove to be foreign influence - a process that often takes too long in an online environment where videos can go viral within hours.

The posts are hard to trace to their origin, but Western researchers say many share common traits - from stylistic cues to distribution patterns - that link them to organised disinformation units aligned with the Kremlin.

One campaign dubbed Matryoshka, or Operation Overload, is believed to have orchestrated a wave of synthetic videos discrediting Moldova's president, Maia Sandu, during her 2025 election bid.

NewsGuard, an organisation that tracks online disinformation, said it spotted common patterns suggesting the same network was likely behind the video featuring Dr Read.

The operation's name - "matryoshkas" are Russian nesting dolls - mirrors its method, which encases an original false claim in layers of ambient re-posts from old or hacked social media accounts.

Unlike traditional Russian propaganda outlets such as the media companies RT and Sputnik, which the West swiftly sanctioned at the start of the invasion of Ukraine, such campaigns "allow for a level of... plausible deniability that complicates counter-influence efforts", said Sophie Williams-Dunning, a cyber and tech researcher at the Royal United Services Institute think tank.

Researchers at Clemson University linked a separate network, branded Storm-1516 by Microsoft's Threat Analysis Centre, to veterans of the Kremlin "troll factory" run by Yevgeny Prigozhin, the leader of the paramilitary Wagner group, before his death in 2023.

In an upcoming study seen by the BBC, the academics shared an example of the speed at which fake news travels on social media.

Each time they saw a Storm-1516 campaign put out a false narrative about Volodymyr Zelensky being "corrupt", for example, that narrative took over roughly 7.5% of all discussions about the Ukrainian president on X in the following week.

"That is something any marketing company would be proud of," said Darren L. Linvill, one of the paper's authors.