Google Cloud launches two new AI chips to compete with Nvidia

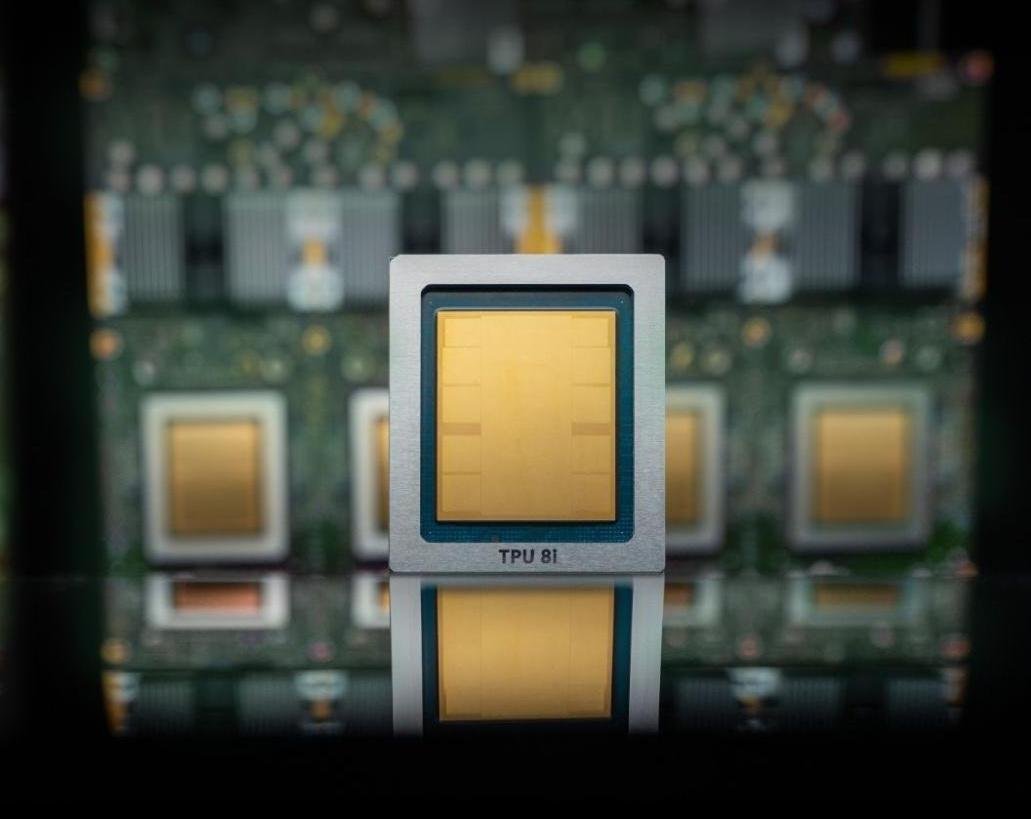

Google Cloud on Wednesday announced that its eighth generation of custom-built AI chips, or tensor processing units (TPUs), will be split in two. One chip, named the TPU 8t, will be geared for model training and another, the TPU 8i, is aimed at inference.

Inference is the ongoing usage of models, aka what happens after users submit prompts.

As you might expect, the company touts some impressive performance specs for these new TPUs compared to previous generations: up to 3x faster AI model training, 80% better performance per dollar, and the ability to get more than 1 million TPUs to work together in a single cluster. The upshot should be a lot more compute for a lot less energy, and a lower cost to customers, than previous versions offered. Google calls these chips TPUs, not GPUs, because its custom low-power chips were originally named Tensor.
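To put the "80% better performance per dollar" figure in concrete terms, here is a rough sketch in Python. It assumes the claim means 1.8x as much work per dollar as the prior generation, which is one reading of Google's number and not something the company spells out. The function name and the $100 baseline are hypothetical, chosen only for illustration.

```python
def cost_for_same_work(baseline_cost: float, perf_per_dollar_gain: float) -> float:
    """Cost of running the same workload after a performance-per-dollar gain.

    perf_per_dollar_gain is the fractional improvement (0.80 for "80% better").
    Assumption: the gain applies uniformly to the whole workload.
    """
    if perf_per_dollar_gain <= -1:
        raise ValueError("gain must be greater than -1")
    return baseline_cost / (1.0 + perf_per_dollar_gain)


baseline = 100.0  # hypothetical spend on the previous generation, in dollars
new_cost = cost_for_same_work(baseline, 0.80)
savings_pct = (baseline - new_cost) / baseline * 100

print(f"New cost: ${new_cost:.2f}")
print(f"Savings: {savings_pct:.1f}%")
```

Under that reading, an 80% gain in performance per dollar cuts the bill for a fixed workload by roughly 44%, not 80%.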

But Google’s chips are not a full frontal assault on Nvidia’s future, at least not yet. Like the other giant cloud providers, including Microsoft and Amazon, Google is using these chips to supplement the Nvidia-based systems it offers in its infrastructure. It is not flat-out replacing Nvidia. In fact, Google promises its cloud will have Nvidia’s latest chip, Vera Rubin, available later this year.

One day the hyperscalers building their own AI chips (which include Amazon, Microsoft and Google) may grow to need Nvidia less, as enterprises move their AI needs to those clouds and port their apps to these chips.

Still, as things stand today, it’s not profitable to bet against Nvidia. As notable chip market analyst Patrick Moorhead jokingly posted on X, he predicted back in 2016, when the search giant launched its first TPU, that the chip could be bad news for Nvidia (and Intel). Nvidia is now a company with a market cap of nearly $5 trillion, meaning that prediction didn’t exactly stand the test of time.

If all goes according to Nvidia’s plan, Google’s growth as an AI cloud provider would result in more business for the chip maker, not less, even if many workloads run on Google’s chips.

In fact, Google also says it has agreed to work with Nvidia to engineer computer networking that allows Nvidia-based systems to perform even more efficiently in its cloud. In particular, the two tech giants are working to beef up the software-based networking tech called Falcon, which Google created and open sourced in 2023 under the godfather of all open source data center hardware organizations, the Open Compute Project.
